So a bunch of glowing reviews by elite reviewers might make another elite reviewer more likely to post a positive review, too-or a negative one. There's peer pressure on Yelp, and elite Yelpers worry about their status. "On Yelp, Elites are given the same weight but they give better reviews," Luca says. But there's no way under a simple weight average system to give these reviews more collective credence than others. Yelp's cadre of so-called elite reviewers have higher status on the site (They receive this status from Yelp based on their compliments sent to other Yelpers, whether they vote for reviews that are Useful, Funny, and Cool, and if they consistently post respectful and quality content). Still others are erratic, showing little predictability in how they review. Some reviewers are always critical and leave worse reviews on average others might always be positive, fawning over every restaurant's spaghetti or tuna sub. The new ratings framework takes into account: They used the site's history of reviews to identify factors considered by reviewers-the accuracy of a reviewer can be determined, for example, by studying how far that person's opinions stray from the long-running average of the restaurants they review. Luca's team decided to try to develop what they believed would be an optimal way to construct overall ratings on Yelp. Moreover, they don't account for observable patterns in the way individuals rate restaurants." "Essentially, they treat a restaurant that gets three five-star reviews followed by three one-star reviews the same way that they treat a restaurant that gets three one-star reviews followed by three five-star reviews.

"Arithmetic averages are only optimal under very restrictive conditions, such as when reviews are unbiased, independent, and identically distributed signals of true quality," he says. The problem, Luca says, is that star systems such as these aren't as accurate, or optimal, as they could be.

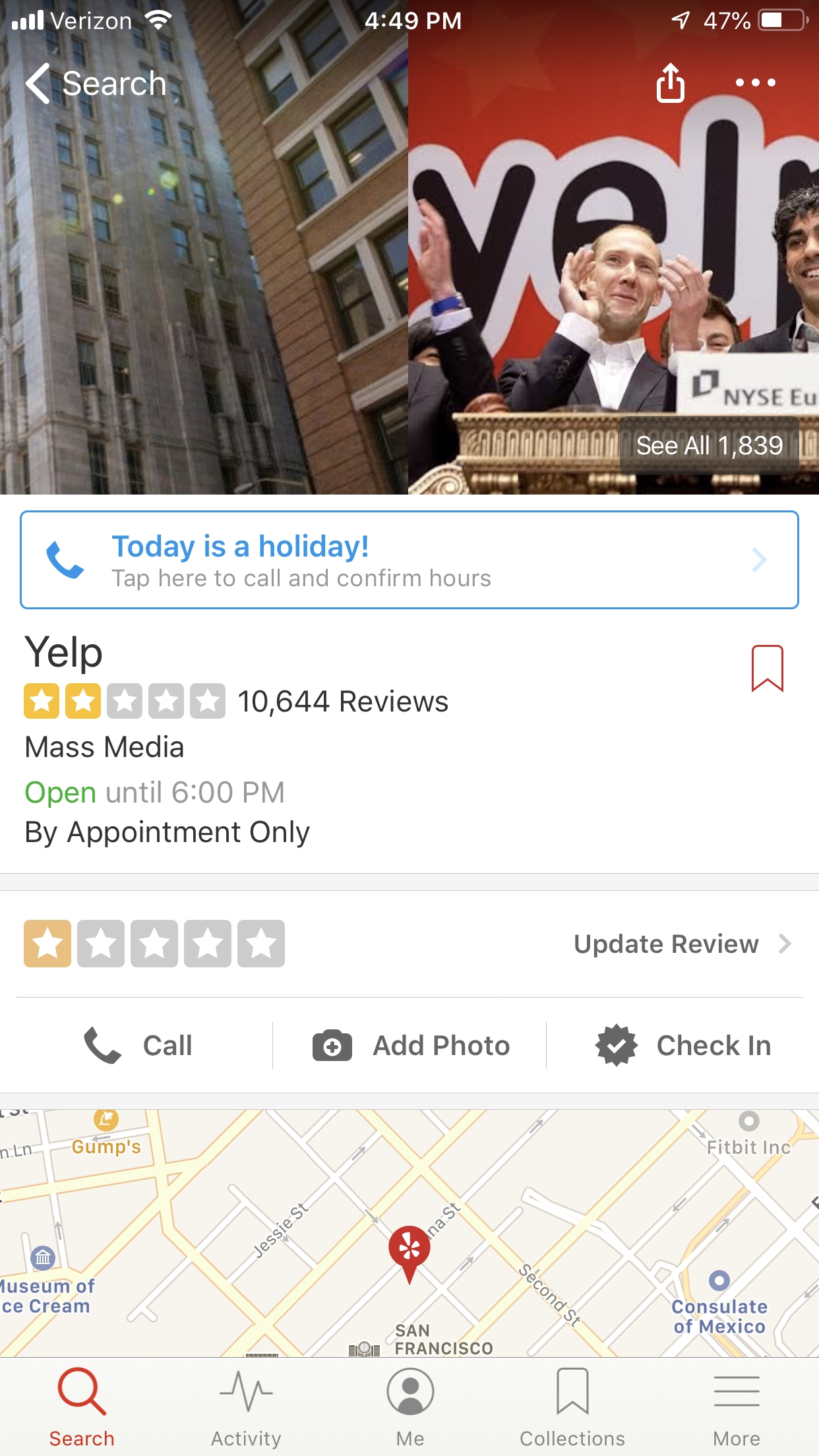

Yelp (and many other review websites) aggregate information by giving an arithmetic average rating based on collective reviews. Do their reviews get more weight? Who decides whether one review is unbiased or more thorough than another review?" "I saw that some reviewers were better at reviewing than others. (The research is a follow-up to Luca's 2011 paper Reviews, Reputation and Revenue: The Case of, which explored how restaurant reviews on Yelp affect their bottom lines.) Reviewing the reviewersĪfter spending some time on Yelp, Luca questioned whether the site's star-based rating system (five stars for the best) truly reflected the quality of the reviewed products and businesses. Luca co-wrote the paper, Optimal Aggregation of Consumer Ratings: An Application to, with Weijia Dai, of the University of Maryland Jungmin Lee, of Sogang University and the Institute for the Study of Labor and Ginger Jin, of the University of Maryland and the National Bureau of Economic Research.

The framework relies on an algorithm set up to tackle bias inherent in reviews by taking into account reviewers who vary in accuracy, stringency, and by reputation. Michael Luca, an assistant professor at Harvard Business School, believes a review framework developed with colleagues could provide better information on sites such as Yelp, eBay, and TripAdvisor.
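To make the contrast between a plain arithmetic average and a reviewer-adjusted rating concrete, here is a minimal Python sketch. It is not the model from the paper: the function names, the per-reviewer `bias` values (a stand-in for stringency), and the per-reviewer `weight` values (a stand-in for accuracy) are all hypothetical and chosen purely for illustration.

```python
def simple_average(stars):
    """Plain arithmetic mean of star ratings; ignores who wrote each review and when."""
    return sum(stars) / len(stars)


def adjusted_rating(reviews, bias, weight):
    """Weighted mean of bias-corrected stars (illustrative assumption, not the paper's method).

    reviews: list of (reviewer_id, stars) pairs
    bias:    hypothetical per-reviewer stringency, e.g. how far the reviewer's
             stars sit above (+) or below (-) the long-run restaurant average
    weight:  hypothetical per-reviewer accuracy weight (higher = more trusted)
    """
    total_weight = sum(weight[reviewer] for reviewer, _ in reviews)
    corrected = sum(weight[reviewer] * (stars - bias[reviewer])
                    for reviewer, stars in reviews)
    return corrected / total_weight


# The arithmetic mean cannot tell these two review histories apart.
declining = [5, 5, 5, 1, 1, 1]
improving = [1, 1, 1, 5, 5, 5]
same_score = simple_average(declining) == simple_average(improving)

# Three made-up reviewers: one harsh, one fawning, one steady and more trusted.
reviews = [("harsh", 2), ("fawning", 5), ("steady", 4)]
bias = {"harsh": -1.0, "fawning": 1.0, "steady": 0.0}
weight = {"harsh": 1.0, "fawning": 1.0, "steady": 2.0}

plain = simple_average([stars for _, stars in reviews])
adjusted = adjusted_rating(reviews, bias, weight)
```

In this toy data, the harsh reviewer's two stars are nudged up, the fawning reviewer's five stars are nudged down, and the steady reviewer counts double, so the adjusted score can differ from the plain mean even though the underlying stars are the same. The sketch does not attempt to capture review order or peer effects among elite reviewers.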
